Creative quality now accounts for 50–60% of what determines whether your ad wins a Meta auction. That number represents a fundamental shift in how paid social works. A 2017 benchmark put creative at 47% of ad sales effectiveness, and the industry has referenced that figure ever since. Post-Andromeda, the weight of creative in auction outcomes has climbed even higher.

This article breaks down a practical creative testing framework for paid social: the named methodologies media buyers actually reference (3-3-3, 3-2-1, 3-Phase), the diagnostic metrics that tell you what’s working, and the budget ranges that make testing financially viable. It’s written for media buyers, DTC brand owners, and agency teams running campaigns on Meta and similar platforms.

Key Takeaways

Creative quality now determines 50–60% of Meta auction outcomes post-Andromeda, up from the 47% benchmark established in 2017, making creative testing the most important operational discipline in paid social.

The 3-3-3 framework (3 concepts × 3 variations × 3 hooks) was popularized by Pilothouse, which reported in 2026 that brands using it achieved a 30% year-over-year improvement in outbound CTR.

Three named frameworks cover different testing needs: the 3-3-3 (27-asset matrix for high-volume accounts), the 3-2-1 (6-asset matrix for tighter budgets), and the 3-Phase (Pre-Flight → New vs. BAU → Scaling for staged rollouts). They can work together.

Diagnostic metrics should be evaluated in sequence: thumb-stop rate first (target 25–30%+), then hold rate, then CTR, and only then efficiency metrics like CPA or ROAS. Conversion data alone cannot tell you which part of the creative failed.

Each test variant needs at least 10,000 impressions or 1.5–2x your target CPA in spend before results become directional. Cutting tests early is one of the fastest ways to waste budget without learning anything.

Concept testing (message vs. message) should happen before variation testing (executions of a winning message). Static images or text overlays are the cheapest way to validate a messaging angle before committing to video production.

Why creative testing matters more than ever

Three forces are converging to make creative testing the most important operational discipline in paid social right now. The first is algorithmic: Meta’s Andromeda update now weights creative diversity more heavily in ad delivery decisions. Correctly structured campaigns saw an 8–10% average uplift after the change. Advertisers who run a single creative set across broad audiences are losing delivery share to competitors rotating multiple concepts through Advantage+ and CBO campaign structures.

The second force is financial. CPMs rose 18%+ in early 2025, with fashion and beauty verticals hit hardest. Meta’s average CPA sits at $23.10 across industries. When each impression costs more, a creative that underperforms burns budget faster than it did 18 months ago. The margin for error on creative decisions has narrowed considerably.

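The third is fatigue. Audiences burn through ads faster than they used to: six or more exposures cause a roughly 16% drop in purchase intent (AdManage, 2025). Top-performing brands now refresh creatives every 10 days on average to stay ahead of that curve.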

| Force | What changed | Key data point |
| --- | --- | --- |
| Andromeda algorithm shift | Creative diversity weighted more heavily in ad delivery | 8–10% uplift for correctly structured campaigns (Wonderkind citing Meta, 2025) |
| Rising costs | CPMs climbing across verticals, especially fashion and beauty | CPMs up 18%+ in early 2025; average CPA at $23.10 (AdAmigo, 2025) |
| Creative fatigue acceleration | Audiences burn through ads faster; top brands refresh every 10 days | 6+ exposures cause ~16% drop in purchase intent (AdManage, 2025) |

Without a structured testing process, you’re spending more on creatives that die faster inside an algorithm that now rewards variety over volume. That’s the operating environment in 2026, and it explains why the teams investing in creative testing frameworks are pulling ahead of those still guessing.

What a creative testing framework actually is

A creative testing framework is a structured system for generating hypotheses about ad performance, testing those hypotheses under controlled conditions, and feeding the results back into future creative production. It replaces the ad-hoc “let’s try this and see” approach that most teams default to when creative decisions happen on the fly, and it creates a predictable pipeline for identifying winning concepts instead of relying on intermittent creative hits.

The core loop is consistent across practitioners and AI models alike: form a hypothesis (e.g., “problem-aware hooks will outperform product-feature hooks”), build variations that isolate the variable you’re testing, run the test with enough budget and time to generate a meaningful read, measure the right metrics in the right order, and iterate based on what you learn. Each cycle compounds your understanding of what your specific audience responds to.

One distinction worth internalizing early is the difference between concept testing and variation testing. They serve different purposes and should happen in sequence, not simultaneously.

| | Concept testing | Variation testing |
| --- | --- | --- |
| What you’re testing | The message, angle, or positioning | Executions of a validated concept |
| Example | “Price savings” vs. “Time savings” vs. “Social proof” | Three different video hooks for the winning “Time savings” angle |
| When to use | First. Before investing in production. | After you’ve identified a winning concept. |

Within each ad, the creative itself breaks into independently testable modules: the hook (controls thumb-stop rate), the body (controls hold rate), and the CTA (drives conversion). Testing hooks first makes the most practical sense because nobody watches the body of an ad they didn’t stop scrolling for. This modular structure is how the 3-3-3 approach operates, and it mirrors how experienced media buyers think about creative performance when diagnosing what went wrong in their accounts.

Message testing before creative testing

We recommend validating your messaging angles on cheap-to-produce static images or simple text overlays before committing budget to video production. If a message doesn’t land as a static, producing a $5,000 video around it won’t fix the underlying angle. Once you know which message resonates with your audience, you invest in high-production variations of that specific winning message.

Three named frameworks compared

Most articles about creative testing describe the general concept without naming specific methodologies. In practice, three frameworks appear repeatedly across practitioner communities and AI-generated recommendations. Each organizes the testing process differently, and they’re not mutually exclusive.

The 3-3-3 approach was popularized by Pilothouse and structures testing as 3 concepts × 3 variations × 3 hooks. You start with three distinct creative concepts (different angles or messages), produce three variations of each, and test three hooks per variation. Pilothouse reported in 2026 that brands using the 3-3-3 framework achieved a 30% improvement in outbound CTR year over year. The approach requires meaningful creative volume, so it suits accounts with production capacity and enough budget to test across 27 combinations.

The 3-2-1 method uses a narrower test matrix: 3 hooks, 2 body variations, and 1 CTA. It works well when you’ve already validated your call-to-action and want to focus testing energy on what captures attention (hooks) and what sustains it (body). The reduced matrix means fewer assets to produce, making the 3-2-1 a better fit for teams on tighter budgets or shorter production timelines.

The 3-Phase framework structures the process as Pre-Flight → New vs. BAU → Scaling. Rather than organizing by matrix dimensions, it moves creatives through testing stages. Pre-Flight isolates new concepts in a dedicated testing campaign. Winners graduate to compete against your best-performing active ads (BAU, or business as usual). Proven winners then get scaled. The framework also includes budget-tier recommendations, from smaller accounts through $500K+/month spenders, with different campaign structure advice at each level.

These three frameworks can work together. The 3-3-3 matrix can operate inside the 3-Phase’s Pre-Flight stage as the creative flywheel feeding new concepts into the pipeline. The 3-2-1 is effectively a lighter version of the 3-3-3’s matrix logic for teams that don’t need 27 asset combinations.

| Framework | Structure | Best for | Budget req. | Complexity |
| --- | --- | --- | --- | --- |
| 3-3-3 | 3 concepts × 3 variations × 3 hooks | Accounts with production capacity and scale | High (27 assets) | High |
| 3-2-1 | 3 hooks, 2 bodies, 1 CTA | Teams with validated CTAs and tighter budgets | Moderate (6 assets) | Moderate |
| 3-Phase | Pre-Flight → New vs. BAU → Scaling | Accounts that need a staging process for new creatives | Flexible (budget-tier specific) | Moderate–High |
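To make the asset counts in the comparison concrete, here is a minimal Python sketch that enumerates both matrices. The concept, variation, hook, body, and CTA labels are hypothetical placeholders, and `build_matrix` is an illustrative helper, not part of any ad platform API.

```python
from itertools import product


def build_matrix(*dimensions):
    """Return every combination of the given dimensions as a list of tuples."""
    return list(product(*dimensions))


# Hypothetical labels; substitute your own angles, executions, and hooks.
concepts = ["price savings", "time savings", "social proof"]
variations = ["static", "ugc video", "founder video"]
hooks = ["question", "bold claim", "problem statement"]

# 3-3-3: 3 concepts x 3 variations x 3 hooks
matrix_333 = build_matrix(concepts, variations, hooks)

# 3-2-1: 3 hooks x 2 bodies x 1 CTA
matrix_321 = build_matrix(hooks, ["body A", "body B"], ["shop now"])

print(len(matrix_333))  # 27
print(len(matrix_321))  # 6
```

Listing the combinations up front is a quick way to check whether your team can actually produce the full matrix before committing to it.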

How to run a creative test

The most common mistake in creative testing is jumping straight to conversion metrics like CPA and ROAS before understanding where the creative is failing. Diagnostic metrics exist to tell you which specific part of the ad broke down. Conversion metrics only confirm that something broke.

Start with engagement diagnostics and work your way down this hierarchy:

Thumb-stop rate (hook rate): measures whether the opening of your ad captures attention. Target 25–30% or higher in competitive verticals.

Hold rate: reveals whether people watch past the hook into the body of the ad.

Click-through rate: shows whether the body and CTA together generate enough interest to drive a click.

Efficiency metrics (CPA, cost per result): evaluated only after engagement diagnostics confirm the creative is mechanically working.

Profitability metrics (ROAS, MER): the final layer, measuring whether winning creatives actually produce profitable returns.

This hierarchy is what separates a testing framework from simply running ads and checking the bottom line. When your CPA spikes, the diagnostic metrics tell you whether the hook failed, the body lost viewers, or the CTA didn’t convert.
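The sequence above can be sketched as a small Python function that checks each engagement stage in order and reports the first one that falls short. Several things here are assumptions rather than anything the frameworks prescribe: thumb-stop rate is computed as 3-second views over impressions, hold rate as ThruPlays over 3-second views, and the hold-rate and CTR floors are placeholder values. Only the 25% thumb-stop floor comes from the target mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    impressions: int
    three_second_views: int  # assumed numerator for thumb-stop rate
    thruplays: int           # assumed numerator for hold rate
    link_clicks: int


# Only the thumb-stop floor reflects the 25-30% target in the text.
# The hold-rate and CTR floors are hypothetical; set them from your own account data.
THRESHOLDS = {"thumb_stop": 0.25, "hold": 0.30, "ctr": 0.01}


def diagnose(stats, thresholds=THRESHOLDS):
    """Return the first stage of the hierarchy that misses its floor."""
    if stats.impressions == 0:
        return "no_data"

    thumb_stop = stats.three_second_views / stats.impressions
    if thumb_stop < thresholds["thumb_stop"]:
        return "hook"

    views = stats.three_second_views
    hold = stats.thruplays / views if views else 0.0
    if hold < thresholds["hold"]:
        return "body"

    ctr = stats.link_clicks / stats.impressions
    if ctr < thresholds["ctr"]:
        return "body_or_cta"

    # Engagement is mechanically working; now look at CPA, then ROAS/MER.
    return "engagement_ok"


print(diagnose(AdStats(10_000, 1_500, 600, 120)))  # hook
```

Because the function stops at the first failing stage, a weak hook is always reported before hold rate or CTR, which matches the order of evaluation described above.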

How much budget to set aside for testing depends on how mature the account is:

| Account type | Testing budget | Rationale |
| --- | --- | --- |
| Established accounts with proven winners | 5–10% of total ad budget | Enough to cycle in new concepts without disrupting profitable campaigns |
| Growth-stage accounts still searching for winning concepts | 20–30% of total ad budget | Higher allocation needed because the account hasn’t found repeatable creative winners yet |

For test duration, patience matters more than speed. We recommend waiting until each variant reaches at least 10,000 impressions or 1,000 conversions before drawing directional conclusions. There is also a spend threshold: let each variant run until it has spent 1.5–2x your target CPA before judging performance. Killing tests early is one of the fastest ways to learn nothing useful from your budget.
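One way to apply these thresholds is a simple readiness check per variant. The sketch below assumes an either-or rule (the impression floor or the spend floor) and defaults to the lower 1.5x spend multiple. The function and parameter names are illustrative, and a stricter team could require both conditions instead.

```python
def is_directional(impressions, spend, target_cpa,
                   min_impressions=10_000, spend_multiple=1.5):
    """Return True once a variant has enough delivery to read directionally.

    Assumption: either floor is sufficient on its own. Raise spend_multiple
    to 2.0 for the conservative end of the 1.5-2x range.
    """
    enough_impressions = impressions >= min_impressions
    enough_spend = spend >= spend_multiple * target_cpa
    return enough_impressions or enough_spend


# With a $30 target CPA, the spend floor is $45 at 1.5x.
print(is_directional(impressions=4_000, spend=40.0, target_cpa=30.0))   # False
print(is_directional(impressions=4_000, spend=50.0, target_cpa=30.0))   # True
print(is_directional(impressions=12_000, spend=10.0, target_cpa=30.0))  # True
```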

When a creative wins, the work isn’t over. Promote it into your BAU campaign structure and start producing fresh variations of the winning concept, not identical copies. Top-performing brands refresh their creatives roughly every 10 days to stay ahead of fatigue. That cadence keeps the algorithm fed with creative diversity while staying anchored to the angles your audience has already validated.

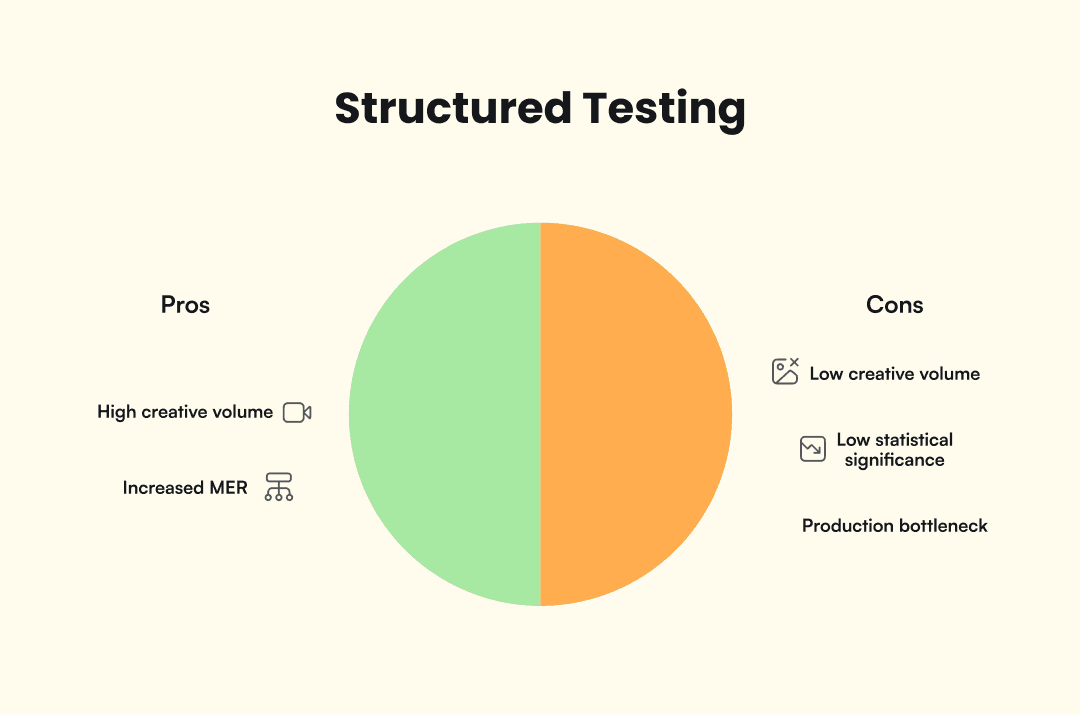

When structured testing isn’t the answer

Structured testing frameworks are not universally the right move. These limitations aren’t reasons to avoid testing altogether, but they are signals to match your approach to your actual situation.

Advantage+ may outperform manual structures at low creative volume. For accounts running fewer than five to ten distinct creatives at a time, Meta’s automated creative optimization can do more than a 3-3-3 matrix you can’t fill. Tier 11, citing Meta data (2026), reported a 22% increase in MER from Advantage+ campaigns.

Most A/B tests never reach statistical significance. Harvard Business Review, as reported by Recast, found that 80–90% of A/B tests fail to produce statistically significant results. Low-volume accounts may get more value from producing diverse creatives in higher quantity than from running rigorous test structures with sample sizes too small for reliable reads.
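To see why small samples struggle, here is a sketch of a standard two-proportion z-test applied to click-through rates, using only the Python standard library. This is one common significance check, not the method behind the Harvard Business Review figure, and the click and impression counts are invented for illustration.

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Return (z, two-sided p-value) for the difference between two CTRs."""
    p_a = clicks_a / n_a
    p_b = clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 1.0% vs. 1.2% CTR at 10,000 impressions per variant.
z_small, p_small = two_proportion_z_test(100, 10_000, 120, 10_000)
print(p_small > 0.05)  # True: not significant at the 0.05 level

# The same CTRs at 100,000 impressions per variant.
z_large, p_large = two_proportion_z_test(1_000, 100_000, 1_200, 100_000)
print(p_large < 0.05)  # True: significant at the 0.05 level
```

In this made-up case, a 20% relative CTR lift is indistinguishable from noise at 10,000 impressions per variant and only becomes clear with ten times the delivery, which is the trade-off low-volume accounts face.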

A production bottleneck breaks any framework. A testing framework without the creative volume to feed it is an empty pipeline. If your team can only produce two new ads per week, solve the production constraint before investing in a multi-phase testing process.

Conclusion

In the post-Andromeda environment, creative testing has become the primary lever for ad performance. Audience targeting still matters, but the algorithm increasingly rewards creative diversity and quality over audience engineering. A testing framework, whether you start with the 3-3-3, the 3-2-1, or the 3-Phase model, gives your team a repeatable process for learning what works instead of guessing.

Every test teaches you something about your audience, your creative approach, and your market. The framework is the starting point, not the finish line. Tools like AdMove can help teams produce creative variations at the velocity a testing framework demands, but the strategic decisions about what to test and why stay with you.