The AI avatar market is projected to grow from $0.80 billion in 2025 to $5.93 billion by 2032, a 33.1% compound annual growth rate. That growth reflects a practical shift in how companies produce video content, not just a technology trend. By 2026, an estimated 55% of businesses had adopted AI video tools, and AI avatars are driving much of that adoption because they solve a specific problem: getting presenter-led video produced quickly and affordably.
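
The growth rate quoted above can be sanity-checked with the standard compound annual growth rate formula. The short Python sketch below is only an arithmetic check on the two market figures. The function name `cagr` is ours, and the seven-year span assumes the projection treats 2025 as the base year.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.33 means 33%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: $0.80B in 2025 and $5.93B by 2032 (7 annual periods).
rate = cagr(0.80, 5.93, 2032 - 2025)
print(f"Implied CAGR: {rate:.1%}")  # Implied CAGR: 33.1%
```

The result matches the 33.1% figure, so the start value, end value, and growth rate are consistent with one another.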

An AI avatar is a digital representation powered by artificial intelligence that can speak, express emotion, and interact with people. Unlike a static profile picture or cartoon character, an AI avatar combines generative AI with text-to-speech technology to produce video that looks and sounds human without requiring a camera, a studio, or an actor. For marketers, that means producing dozens of video variations in the time a traditional shoot takes to deliver one.

This guide covers what AI avatars are, how the technology works, the different types available, where businesses use them across marketing, customer service, and training, what they cost compared to traditional video production, and how to evaluate the ethical and legal questions that come with them.

What Is an AI Avatar?

The term “avatar” has been around since the early days of the internet, when it meant a simple image representing a user in a chatroom or game. AI avatars are a different category entirely. An AI avatar is a digital human powered by machine learning that can speak, move, and display facial expressions, either in pre-rendered video or, for conversational versions, in real time. Three criteria separate an AI avatar from any other type of digital character:

Visual embodiment: a realistic or stylized human form

AI-driven intelligence layer: generative AI and deep learning models that control behavior

Communication ability: the avatar can deliver speech with lip-sync accuracy and express emotion through movement and tone

In practice, this means an AI avatar receives a text script, processes it through multiple AI models, and outputs a video of a digital human speaking those words with matching lip movements, gestures, and expressions. The technology goes well beyond rendering a static face. Deep learning models predict how a person’s mouth, eyes, and head should move for each syllable. The output closely mimics real human delivery.

The simplest way to understand the difference: a traditional avatar is a fixed image you design yourself, like a gaming profile picture or an Apple Memoji. It has no intelligence, no voice, and no ability to respond. An AI avatar generates its own motion and speech from text input. It produces new video content every time it receives a new script.

AI Avatar vs Chatbot vs Digital Twin vs Virtual Assistant

These four terms show up in nearly every conversation about AI-generated digital humans, and they overlap enough that they get used interchangeably. They describe different things, though, and understanding the distinctions matters when evaluating tools and use cases.

Attribute | AI Avatar | Chatbot | Digital Twin | Virtual Assistant |

Definition | AI-powered digital human that speaks and expresses emotion through video | Text-based conversational AI that responds to typed input | Exact AI replica of a specific real person’s appearance and voice | Task-oriented AI designed to complete actions and answer questions |

Visual form | Yes, realistic or stylized human | No, text interface only | Yes, cloned from a real individual | Varies: some have a visual form, many do not |

Intelligence | Generative AI for visuals and speech; NLP for interactive versions | NLP and LLMs for text conversation | Same as AI avatar, plus identity-specific training data | NLP for commands, integrations for task execution |

Primary use | Content delivery and presentation | Conversation and support | Scaling a specific person’s presence | Task completion and information retrieval |

Example | Branded AI presenter in a product demo video | ChatGPT, Intercom chat widget | AI clone of a CEO delivering investor updates | Siri, Alexa, Google Assistant |

These categories are not mutually exclusive. A digital twin is a specific type of AI avatar, one that replicates a real person’s likeness and voice rather than using a stock or generated face. Some AI avatars serve as virtual assistants when placed in customer-facing roles where they answer questions and complete tasks in real time. And conversational AI avatars rely on the same NLP and LLM technology that powers chatbots, with a visual and voice layer built on top. The key question when choosing between these categories is whether your use case is primarily visual and presentational (AI avatar), text-first (chatbot), identity-specific (digital twin), or task-oriented (virtual assistant).

How Do AI Avatars Work?

AI avatars operate on four technology layers that work together to turn a text script into a finished video of a digital human speaking. Each layer handles a different part of the process.

The visual layer controls how the avatar looks and moves. Generative AI models, including GANs (generative adversarial networks) and diffusion models, either create a fully synthetic face or map an existing person’s likeness onto a digital model. Computer vision and deep learning algorithms analyze reference footage or images to learn how a face behaves: how the eyebrows lift during a question, how the jaw drops on certain vowels, how the head tilts when shifting between points. The output is a photorealistic or stylized digital human that can be animated from any script.

The voice layer converts the written script into spoken audio. Text-to-speech (TTS) engines produce natural-sounding speech across dozens of languages and accents. Voice cloning goes a step further, replicating a specific person’s voice from a short audio sample so the avatar sounds like the individual it represents rather than a generic AI narrator. Most AI voiceover generators now support both stock and cloned voice options.

The sync layer ties audio and visuals together. Lip-sync technology aligns the avatar’s mouth movements with each phoneme in the audio track. Facial expressions shift to match the emotional tone of the script, while hand gestures are generated automatically to support the delivery. The quality of this layer determines whether the avatar feels like a real speaker or a poorly dubbed translation.
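
To make the phoneme alignment idea concrete, here is a deliberately simplified Python sketch. Production systems typically predict mouth shapes with learned models. This example swaps in a fixed lookup table instead, and the table, the `Keyframe` type, and the `visemes_for` function are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified phoneme-to-mouth-shape (viseme) table.
# A real sync layer would use a trained model rather than a fixed mapping.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AA": "jaw_open", "AE": "jaw_open",
    "UW": "lips_rounded", "OW": "lips_rounded",
}


@dataclass
class Keyframe:
    time_s: float  # when the mouth shape starts, in seconds
    viseme: str    # name of the mouth shape to display


def visemes_for(timed_phonemes):
    """Turn (phoneme, start_time) pairs into keyframes, merging consecutive repeats."""
    frames = []
    for phoneme, start in timed_phonemes:
        shape = PHONEME_TO_VISEME.get(phoneme, "neutral")
        if not frames or frames[-1].viseme != shape:
            frames.append(Keyframe(start, shape))
    return frames


# Made-up timings for four phonemes in an audio track.
frames = visemes_for([("M", 0.00), ("AA", 0.08), ("P", 0.21), ("B", 0.27)])
for frame in frames:
    print(f"{frame.time_s:.2f}s -> {frame.viseme}")
```

The last two phonemes share a mouth shape, so they collapse into a single keyframe. Merging repeats like this is one simple way to avoid jittery mouth motion.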

The intelligence layer adds real-time responsiveness. Natural language processing (NLP) and large language models (LLMs) power conversational AI avatars that can answer questions, follow dialogue branches, and adjust their responses based on what a user says or asks. Not every AI avatar uses this layer. Scripted presenters, the most common format in marketing, rely on the first three layers only. Conversational avatars used in customer service or virtual reception roles need all four.

Layer | Function | Key technology |

Visual | Creates and animates the avatar’s face and body | GANs, diffusion models, computer vision |

Voice | Converts text to natural-sounding speech | Text-to-speech (TTS), voice cloning |

Sync | Aligns lip movement, expressions, and gestures to audio | Lip-sync algorithms, facial expression modeling |

Intelligence | Enables real-time conversation and response | NLP, LLMs (only for conversational avatars) |

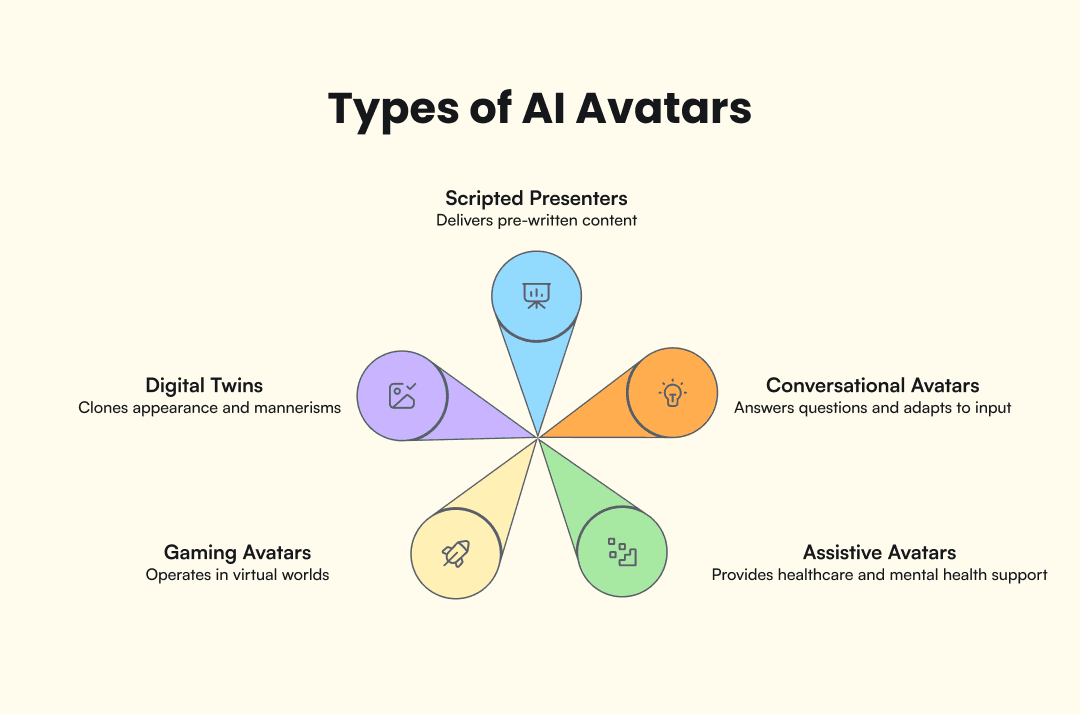
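
The way the four layers hand work to one another can be sketched as a small pipeline. The Python below is a toy model under stated assumptions, not any platform's API: every function is a stub that returns a placeholder string, and the names (`render`, `voice_layer`, `AvatarVideo`, and so on) are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AvatarVideo:
    script: str
    audio: str
    frames: str
    synced: bool


def intelligence_layer(user_message: str) -> str:
    """Stub for NLP/LLM response generation (conversational avatars only)."""
    return f"Thanks for asking about '{user_message}'. Here is what I can tell you."


def voice_layer(script: str, voice: str = "stock") -> str:
    """Stub for text-to-speech: returns a label instead of real audio."""
    return f"audio[{voice}]({len(script.split())} words)"


def visual_layer(avatar_id: str) -> str:
    """Stub for face and body generation: returns a label instead of frames."""
    return f"frames[{avatar_id}]"


def sync_layer(audio: str, frames: str) -> bool:
    """Stub for lip-sync: reports success when both inputs are present."""
    return bool(audio) and bool(frames)


def render(avatar_id: str, script: Optional[str] = None,
           user_message: Optional[str] = None) -> AvatarVideo:
    """Scripted presenters pass `script`; conversational avatars pass `user_message`."""
    if script is None:
        if user_message is None:
            raise ValueError("Provide either a script or a user message")
        script = intelligence_layer(user_message)  # fourth layer, only when needed
    audio = voice_layer(script)
    frames = visual_layer(avatar_id)
    return AvatarVideo(script, audio, frames, sync_layer(audio, frames))


scripted = render("presenter_01", script="Meet our new product.")
conversational = render("agent_01", user_message="pricing")
print(scripted.audio)
print(conversational.script)
```

The scripted call never touches the intelligence layer, which mirrors the point above that scripted presenters rely on the first three layers only.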

Types of AI Avatars

Scripted presenters are the most common type of AI avatar in commercial use. They receive a written script, and text-to-speech converts it to audio while lip-sync aligns the avatar’s mouth movements. The result is a finished video that looks like a real person talking to camera. Marketing teams use them for product videos, explainer content, and training materials. For marketers testing multiple ad angles, scripted presenters allow dozens of video variations from a single script set without reshooting.

Conversational avatars respond in real time instead of reading from a script. NLP powers them to answer questions, follow branching dialogue, and adapt based on user input. Customer service teams use them as virtual receptionists, onboarding guides, and FAQ handlers where a face-to-face presence adds trust without requiring a live human agent.

Digital twins clone a specific person’s appearance, voice, and mannerisms. A CEO might create a digital twin to deliver personalized investor updates at scale, or a content creator might use one to produce videos in languages they don’t actually speak. Digital twins are a subset of AI avatars, distinguished by the fact that they replicate a real, identifiable individual.

Gaming and metaverse avatars operate in virtual environments and are often built with avatar platforms like Ready Player Me or Genies. These range from fully user-designed characters to AI-enhanced avatars that adapt their expressions and movements based on player behavior. They sit at the entertainment end of the spectrum, separate from the business-oriented categories above.

Assistive avatars serve healthcare, eldercare, and mental health support functions. Apps like Replika use AI-driven avatars as conversation companions, while hospital systems are testing avatar-based patient education tools. This category is still emerging but growing as the underlying AI models improve.

Type | Best for | How it works |

Scripted presenter | Marketing videos, training, explainers | Reads from a text script with lip-synced delivery |

Conversational | Customer service, onboarding, FAQ | Responds in real time using NLP |

Digital twin | Scaling a specific person’s presence | Clones a real individual’s face, voice, and mannerisms |

Gaming / metaverse | Virtual worlds, entertainment | User-designed or AI-enhanced characters in 3D environments |

Assistive | Healthcare, eldercare, mental health | AI companions for therapeutic or educational conversation |

Where AI Avatars Are Used

Marketing and advertising is where AI avatars deliver the most direct ROI for the businesses reading this guide. Brands need dozens of ad variations to test hooks, scripts, and creative angles, but a traditional video shoot produces two or three versions at most. AI avatars remove that production bottleneck. Marketers can produce UGC-style presenter videos, swap scripts to test different messages, and localize ads across languages by changing the voiceover without reshooting the visual. AdMove takes this further by integrating avatar creation into a full ad campaign workflow, from product research and creative strategy to script generation, video ad production, and campaign launch.
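
To see how quickly the variation count grows when only the text and voiceover change, consider the sketch below. The hooks, scripts, and languages are hypothetical placeholders, not data from a real campaign. The point is only the combinatorics.

```python
from itertools import product

# Hypothetical creative inputs for illustration.
hooks = ["problem-first", "testimonial", "price drop"]
scripts = ["30s demo", "15s teaser"]
languages = ["en", "es", "de", "ja"]

# Each combination is one candidate ad: same avatar visual, different text and voiceover.
variations = [
    {"hook": hook, "script": script, "language": language}
    for hook, script, language in product(hooks, scripts, languages)
]

print(len(variations))  # 3 hooks x 2 scripts x 4 languages = 24
```

With a traditional shoot, each of those 24 combinations would need its own footage or dub. With an avatar workflow, each one is a new text input.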

Customer service teams use conversational AI avatars as virtual agents for support, onboarding, and FAQ handling. The avatar provides a face for interactions that would otherwise be a text chat window or automated phone tree. Testing in this space consistently shows higher engagement and trust scores compared to text-only interfaces, which is why companies in banking, telecom, and insurance have been early adopters of customer-facing avatar agents.

Education and corporate training has produced the strongest documented results so far:

Teleperformance saved five days of production time and $5,000 per video while training over 380,000 employees using AI avatar-based content

Zoom created more than 200 micro-training videos with 90% faster production timelines and savings of up to $1,500 per employee

Healthcare applications are earlier-stage but growing. Hospitals and clinics test AI avatars for patient education, where a virtual presenter explains procedures or medication instructions in the patient’s native language. Multilingual delivery is a particularly strong fit here since healthcare information needs to be accessible regardless of language barriers. Mental health apps use avatar-based companions for therapeutic conversation, and accessibility-focused tools use sign language avatars to reach hearing-impaired patients.

How Much Do AI Avatars Cost?

The cost gap between AI avatar video and traditional video production is the single strongest argument for adoption. Traditional video production runs $1,000 to $50,000 per finished minute, depending on talent, location, and production complexity. AI avatar tools? Between $0.50 and $30 per minute. For simple talking-head content, that’s a cost reduction of 90% or more.
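
The 90% figure can be checked against the least favorable pairing in those ranges. The sketch below is plain arithmetic on the numbers quoted above, and the function name `cost_reduction` is ours.

```python
def cost_reduction(traditional_per_min: float, avatar_per_min: float) -> float:
    """Fractional saving when moving from traditional to avatar video."""
    return 1 - avatar_per_min / traditional_per_min


# Least favorable pairing from the ranges above:
# cheapest traditional minute ($1,000) vs. most expensive avatar minute ($30).
conservative = cost_reduction(1_000, 30)
print(f"{conservative:.0%}")  # 97%
```

Even when the cheapest traditional minute is compared with the most expensive avatar minute, the saving is about 97%, so "90% or more" is the cautious end of the claim.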

The savings extend beyond hypotheticals. Whole Life Pet, a pet food brand, reported saving $2,900 per video after switching to AI avatar production. According to 2025 data from Sprello, 63% of businesses using AI video tools reported a 58% reduction in production costs overall. Time savings track the same way. What took weeks of scheduling, shooting, and editing now takes hours from script to finished video.

If you’re evaluating platforms, expect entry-level AI avatar tools to run $20 to $50 per month, with more advanced options priced between $80 and $300 or more per month. The real question for most teams isn’t whether AI avatars are cheaper than traditional production. For most use cases, they are. The question is how quickly the subscription cost pays for itself, and for any team producing more than a few product videos per month, the math tends to work out within the first billing cycle.
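
A rough break-even check makes the payback claim easier to test against your own numbers. In the sketch below, the $300 fee and the $2,900 saving come from figures quoted in this guide. The $100 saving is a hypothetical, deliberately pessimistic input, and the function name is ours.

```python
import math


def videos_to_break_even(monthly_fee: float, saving_per_video: float) -> int:
    """Smallest number of videos per month whose savings cover the subscription."""
    if saving_per_video <= 0:
        raise ValueError("saving_per_video must be positive")
    return math.ceil(monthly_fee / saving_per_video)


# $300/month plan with the $2,900-per-video saving reported by Whole Life Pet.
top_tier = videos_to_break_even(300, 2_900)

# Same plan with a hypothetical, much smaller saving of $100 per video.
cautious = videos_to_break_even(300, 100)

print(top_tier, cautious)  # 1 3
```

Under the reported saving, one video covers the most expensive plan quoted here. Under the pessimistic assumption, it takes three.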

Factor | Traditional video | AI avatar video |

Cost per minute | $1,000–$50,000 | $0.50–$30 |

Production time | Days to weeks (scheduling, shooting, editing) | Hours (script to finished video) |

Scalability | Each variation requires a new shoot | Unlimited variations from one script set |

Localization | Reshoot or dub per language | Swap voiceover, keep the same visual |

Monthly platform cost | N/A (per-project billing) | $20–$300+/month |

Are AI Avatars Safe? Ethics, Legal, and Trust

Consumer trust is the most immediate concern. A 2025 transparency survey by Vidjet found that 42% of U.S. consumers would boycott a brand that used AI-generated faces in advertising without disclosing it. The trust gap grows wider when you factor in the deepfake context: multiple reports estimate that the vast majority of deepfake content online involves non-consensual use of someone’s likeness. AI avatars and deepfakes use much of the same underlying technology, which means the reputational risk of using avatars without transparency is real and measurable.

The regulatory response is picking up speed. In the United States, the Take It Down Act (signed May 2025) targets nonconsensual intimate deepfakes and creates federal liability for platforms that fail to remove them. Tennessee’s ELVIS Act (2024) established legal liability for unauthorized use of a person’s voice or likeness through AI. In the EU, the AI Act requires clear labeling of any content generated or manipulated by AI, with enforcement provisions taking effect in August 2026. These laws don’t ban AI avatars, but they set boundaries around consent, disclosure, and identity rights. The FTC has also warned that failing to disclose AI-generated content in advertising could constitute a deceptive practice under existing consumer protection law.

For anyone using AI avatars in marketing or communications, the practical guidance comes down to three things:

Disclose when your presenter is AI-generated

Never replicate a real person’s likeness without their written consent

Choose platforms that include ethical use guidelines

The brands getting this right are the ones treating disclosure and consent as defaults now, before regulators make them mandatory.

Top AI Avatar Tools and Platforms

The AI avatar space has a few dominant platforms, each with a different focus.

HeyGen is the scale leader with more than 85,000 customers as of 2025, over 230 stock avatars, and support for 140+ languages. It covers the widest range of use cases, from marketing videos to corporate training, and its template library makes it a fast option for producing scripted avatar content.

Synthesia focuses on enterprise and training. The Teleperformance and Zoom case studies cited earlier both used Synthesia’s platform, and its strength is producing standardized content at volume for large organizations.

AdMove takes a different approach by integrating avatar creation into a full advertising workflow. Rather than functioning as a standalone avatar generator, AdMove connects product research, creative strategy, script generation, and avatar video production into a single ad campaign pipeline, built for ecommerce and performance marketing teams.

Platform | Primary focus | Standout feature |

HeyGen | General-purpose avatar video | 230+ stock avatars, 140+ languages, largest template library |

Synthesia | Enterprise training | High-volume standardized content for large organizations |

AdMove | Ad production pipeline | Avatars integrated into full campaign workflow (research → strategy → script → video → launch) |

Conclusion

AI avatars can reduce the cost of producing simple presenter-led video by 90% or more, and they cut production time from weeks to hours. The technology is mature enough for marketing, training, and customer service use. And the legal framework around consent, disclosure, and identity rights is catching up, with the Take It Down Act, the ELVIS Act, and the EU AI Act all setting clear rules for how synthetic media can and cannot be used.

For marketers, the practical question has moved past “what is an AI avatar” to “how do I use one to produce better ads, faster?” If you’re ready to find out, start with AdMove’s AI Avatar Maker.